Large File control in Git using Gitea

I had read about Git Large File Storage (LFS) and seen it in action in some GitHub repositories (especially lately with all the LLM gigabyte files) but I hadn't needed this until this week. Read on to see what I learned.

The Context

I'm a big fan of hosting my data. I don't like putting my code, my knowledge, and my databases on public services. I mean, don't get me wrong, those services have a lot of value, make your life a lot easier, and are the correct solution in some cases, but, in general, I prefer to have my data on servers I control.

That is why we host our code using Gitea.

This week I finished the first part of a project to evaluate the recommended maximum size of data that we can manage in a coreBOS install. I created a couple of databases with 32 million records and 5000 users. These databases are 23 Gigabytes!

Now, I needed LFS in Git. So I set out to see how I could do that with Gitea.

Configuring Gitea

The first step was to see how I had to configure Gitea to work with large files. I found this page which seemed easy and proceeded to check the configuration of our installation.

We have Gitea running with docker-compose and, from what I saw in the directory layout and configuration file, everything was ready to work.

[server]
APP_DATA_PATH = /data/gitea
LFS_START_SERVER = true
LFS_CONTENT_PATH = /data/git/lfs
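A quick way to make the same check from a shell is to filter the LFS keys out of app.ini. This is only a sketch: show_lfs_settings is a hypothetical helper name, and the demo runs against a temporary sample file holding the values shown above, because the real location of app.ini depends on how your compose file mounts the data volume.

```shell
# Hypothetical helper: print the LFS_* keys found in a Gitea app.ini.
show_lfs_settings() {
  grep -E '^[[:space:]]*LFS_[A-Z_]+[[:space:]]*=' "$1"
}

# Demo against a sample file; replace "$sample" with the path to your own app.ini.
sample="$(mktemp)"
cat > "$sample" <<'EOF'
[server]
APP_DATA_PATH = /data/gitea
LFS_START_SERVER = true
LFS_CONTENT_PATH = /data/git/lfs
EOF

lfs_lines="$(show_lfs_settings "$sample")"
echo "$lfs_lines"
```

If the command prints nothing for your installation, the LFS keys are not set under those names and the Gitea configuration documentation for your version is the place to look.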

Create a repository and push

Since it was configured, I thought that I could just create a repository and push the large files there, expecting Git/Gitea to magically understand that those 23 GB files needed to be stored in LFS.

Wrong!

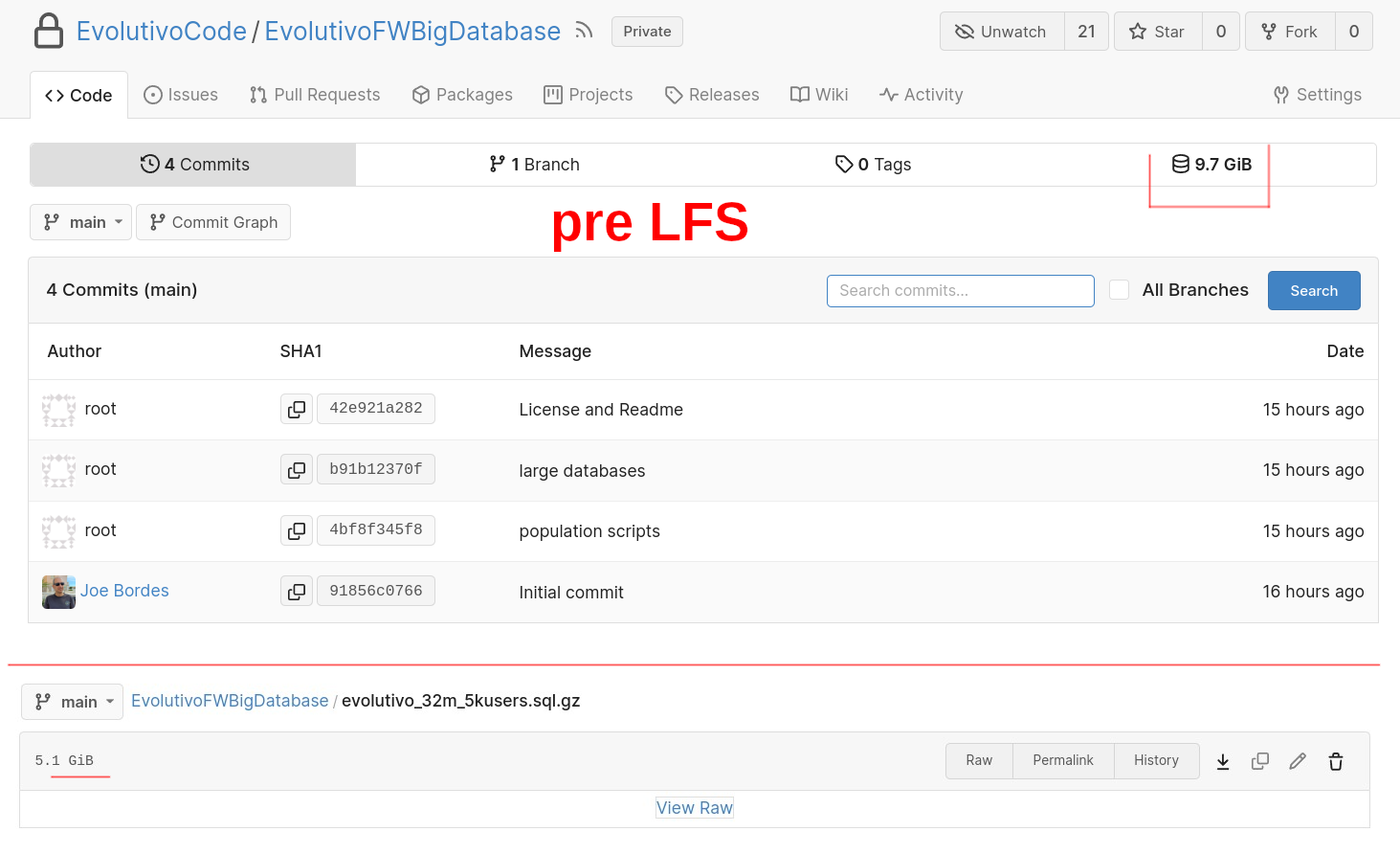

I created the repository, added the files, and pushed, only to see that the databases had been versioned as normal files. Not bad really, it is impressive that Gitea would accept files of that size. So a "tip of the hat" to them, but not what I was looking for.

How to use Git LFS?

The next step in my journey was to start reading and learning about Git LFS. Constantly learning...

After reading the documentation (see references below), I understood that I had two ways forward. One was to trash the repository and start again by doing it right (a valid option in this case because it is a new project and only has 4 commits) and the second was using the lfs migrate command.

Well, I wasn't going to lose the opportunity to learn something new at this point so I decided to do both.

LFS Migrate

First I had to install git-lfs. I opted to use the system apt-get install git-lfs command. That installed version 3.0.2 on my Ubuntu 22.04.2 LTS.

Then I ran git lfs install to perform the required global configuration changes.

root@xxx:/.../EvolutivoFWBigDatabase# git lfs install
Updated git hooks.
Git LFS initialized.

Now I was ready for the migrate command, so inside the incorrect repository, I executed

git lfs migrate import --include="*.sql.gz" --everything

This command took about 12 minutes with this output

migrate: Sorting commits: ..., done.
migrate: Rewriting commits: 100% (4/4), done.
  main 42e921a2827b3bfa1deee502ee841bec562761b7 -> c824d6a0c8f85931c2ec2385f3428b271a2b003c
migrate: Updating refs: ..., done.
migrate: checkout: ..., done.
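Since the import rewrites commits, it can help to look before you leap: git lfs migrate info reports what a migration with the same filters would pick up, without changing anything. The sketch below runs it in a throwaway repository. The temporary directory, dump.sql.gz, and the Demo identity are placeholders, and the report is skipped when git-lfs is not installed.

```shell
# Throwaway repository with one small placeholder dump (not the real databases).
repo="$(mktemp -d)"
cd "$repo" || exit 1
git init -q .
git config user.name "Demo"
git config user.email "demo@example.com"
printf 'placeholder' | gzip > dump.sql.gz
git add dump.sql.gz
git commit -q -m "add dump as a normal file"

# Read-only report over all refs, limited to the pattern we plan to import.
if git lfs version >/dev/null 2>&1; then
  git lfs migrate info --everything --include="*.sql.gz"
  info_status=$?
else
  echo "git-lfs is not installed; skipping the report"
  info_status="skipped"
fi
```

In a real repository you would run only the git lfs migrate info line, from inside the working copy you intend to migrate.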

A git status now shows that the 4 commits I had have been changed:

root@xxx:/.../EvolutivoFWBigDatabase# git status
On branch main
Your branch and 'origin/main' have diverged,
and have 4 and 4 different commits each, respectively.
  (use "git pull" to merge the remote branch into yours)

nothing to commit, working tree clean

I tried to push the changes and got this error:

root@xxx:/.../EvolutivoFWBigDatabase# git push
 ! [rejected] main -> main (non-fast-forward)
error: failed to push some refs

Not nice. This means that LFS rewrote the whole history of the project and now there is no commit in common between my local repository and the online one. After some more investigation, I found this magic command to force a merge. Let's see what that does:

root@xxx:/.../EvolutivoFWBigDatabase# git pull --allow-unrelated-histories
warning: Cannot merge binary files: evolutivo_32m_5kusers.sql.gz (HEAD vs. 4..)
warning: Cannot merge binary files: evolutivo_32m_11users.sql.gz (HEAD vs. 4..)
Auto-merging evolutivo_32m_11users.sql.gz
CONFLICT (add/add): Merge conflict in evolutivo_32m_11users.sql.gz
Auto-merging evolutivo_32m_5kusers.sql.gz
CONFLICT (add/add): Merge conflict in evolutivo_32m_5kusers.sql.gz
Automatic merge failed; fix conflicts and then commit the result.

Both conflicting files are the same in both repositories (local and remote) so I just opted for ours with the command

git checkout --ours evolutivo_32m_11users.sql.gz evolutivo_32m_5kusers.sql.gz
git add evolutivo_32m_11users.sql.gz evolutivo_32m_5kusers.sql.gz
git commit

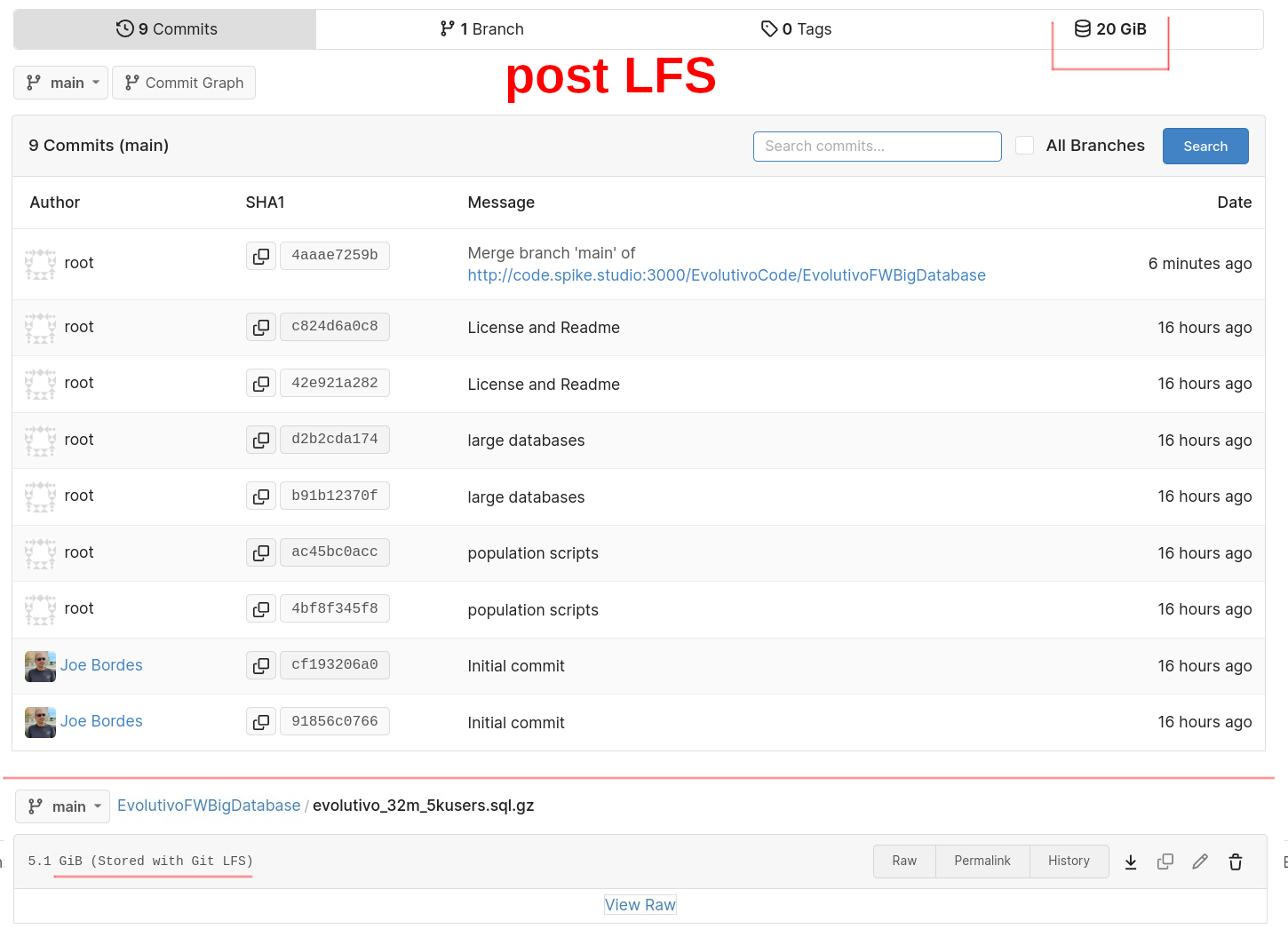

Good. Now I have 5 commits and can push online. The process takes another 10 minutes and I end up with something close to what I wanted but not exactly what I was looking for. My repository history went from this:

to this:

So, yes, I now have the big files stored as LFS links, which is what I needed, but merging the migrated history back duplicated all the commits and, what is worse, doubled the space I am consuming on the server's hard disk. I went from 10 GB to 20 GB!

The large files did end up in the data/git/lfs directory as expected. Effortless magic.

So, let's trash the whole thing and start clean.
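For comparison, and not what was done above: a rewritten history is usually published with a force push rather than by merging the old and new histories together. The sketch below reproduces the rejected push with plain Git (no LFS involved) in throwaway repositories and then resolves it with git push --force-with-lease. The directory names, file.txt, and the Demo identity are placeholders, and amending a commit stands in for the rewrite that git lfs migrate import performs.

```shell
# Throwaway bare "server" repository and a clone of it; nothing here touches a real server.
work="$(mktemp -d)"
git init -q --bare "$work/remote.git"
git clone -q "$work/remote.git" "$work/local" 2>/dev/null
cd "$work/local" || exit 1
git config user.name "Demo"
git config user.email "demo@example.com"

# Publish one commit.
echo "v1" > file.txt
git add file.txt
git commit -q -m "first version"
git push -q origin HEAD

# Rewrite that commit, so local and remote histories diverge.
git commit -q --amend -m "first version, rewritten"

# A plain push is rejected as non-fast-forward...
if git push -q origin HEAD 2>/dev/null; then
  plain_push="accepted"
else
  plain_push="rejected"
fi
echo "plain push: $plain_push"

# ...while a force push replaces the remote branch with the local one.
git push -q --force-with-lease origin HEAD
branch="$(git rev-parse --abbrev-ref HEAD)"
local_head="$(git rev-parse HEAD)"
remote_head="$(git --git-dir="$work/remote.git" rev-parse "refs/heads/$branch")"
echo "local:  $local_head"
echo "remote: $remote_head"
```

A force push discards the commits that were on the server branch, so it only makes sense when nobody else has based work on them.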

LFS Tracking in a git repository

I deleted the repository using the Gitea user interface, created it again, and cloned it. I added the initial commit and the population scripts, and then the LFS tracking.

root@xxx:/.../EvolutivoFWBigDatabase# git lfs track "*.sql.gz"
Tracking "*.sql.gz"

After any invocation of git lfs track or git lfs untrack, you must commit changes to your .gitattributes file, so I add that file and commit it. Now I add the large files as you normally do, commit and push. My repository now looks like what I had expected.
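To see what that .gitattributes entry does, here is a minimal sketch in a throwaway repository. It writes by hand the line that git lfs track "*.sql.gz" adds to .gitattributes and then uses plain git check-attr to confirm which paths would go through the LFS filter. The temporary directory and the names dump.sql.gz and notes.txt are placeholders, not the files from this project.

```shell
# Throwaway repository; all names here are placeholders for illustration.
repo="$(mktemp -d)"
cd "$repo" || exit 1
git init -q .

# The line that `git lfs track "*.sql.gz"` adds to .gitattributes.
echo '*.sql.gz filter=lfs diff=lfs merge=lfs -text' > .gitattributes

# check-attr works on path names, so the files do not need to exist yet.
sql_attr="$(git check-attr filter -- dump.sql.gz)"
txt_attr="$(git check-attr filter -- notes.txt)"
echo "$sql_attr"   # dump.sql.gz: filter: lfs
echo "$txt_attr"   # notes.txt: filter: unspecified
```

Once the large files are committed, git lfs ls-files (with git-lfs installed) lists the ones that are stored as LFS pointers.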

The space in the server is correct and the large files are in the data/git/lfs directory where they should be.

Conclusion

LFS works great and is easy to use and configure

You have to use LFS, it doesn't just happen.

LFS migrate is a delicate command. As with most commands that rewrite commit history, it is better to stay away from it if you can.

I noticed that Gitea asks for login credentials for each large file. I suppose each one has its own separate upload process (which sounds correct). Better to use SSH keys.

I also read something about having an upload timeout that can be increased in the Gitea configuration file. I did not need to do this. Your mileage may vary.

Use Gitea! Use Opensource!